The idea of bringing a microphone into your home that is tied to one of the most powerful retail entities on the planet has always seemed a little creepy to many Americans, and with good reason.

You see, when Google and Amazon want to listen in on you and your family, the reason is never good. Sure, it’s convenient to be able to tell a device to play your favorite song, or to add something to the grocery list, but it’s impossible to do so without allowing these devices to harvest all sorts of data about you and your household.

And, worse still, these devices aren’t terribly secure, as some Google users discovered this week.

According to a recent Bleeping Computer report, a bug in Google Home smart speakers allowed the installation of a backdoor account that could be used to remotely control the device and access its microphone feed. In short, hackers could take over Google’s devices and turn them into spying tools that listen in on users’ conversations.

The deft trick became a boon for its discoverer.

The flaw was discovered by researcher Matt Kunze, who received a $107,500 reward for responsibly reporting it to Google the previous year. Kunze published technical details and an attack scenario illustrating the exploit late last week.

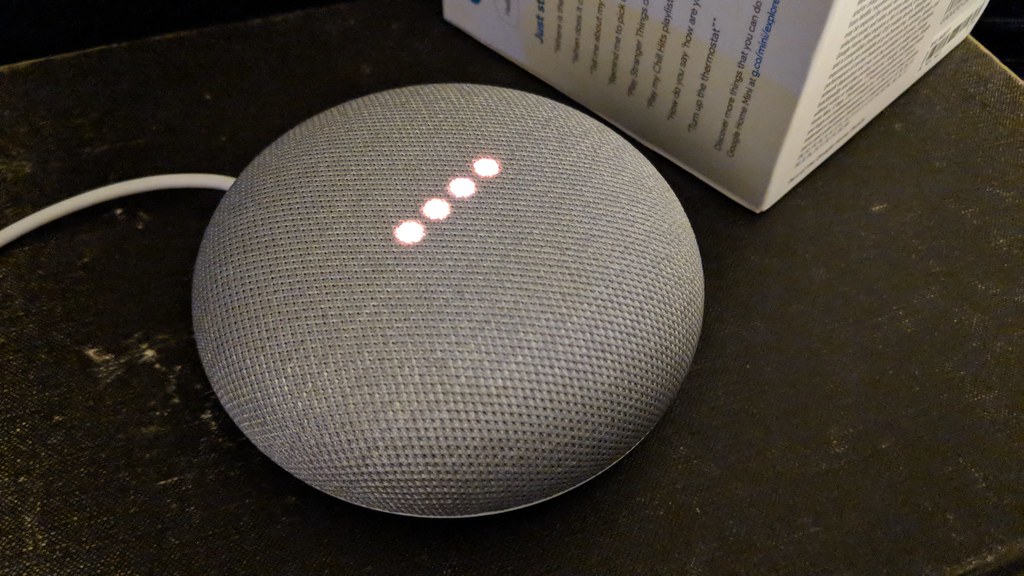

While experimenting with a Google Home Mini speaker, Kunze discovered that new accounts created using the Google Home app could remotely send commands to the device through the cloud API. To capture the encrypted HTTPS traffic and potentially obtain the user authorization token, the researcher ran an Nmap scan to locate the port of Google Home’s local HTTP API and set up a proxy.
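For readers curious what "locating the port" means in practice, here is a minimal sketch of a TCP connect check in Python. It is not Nmap and not Kunze's tooling, only an illustration of the idea: try to connect to each candidate port and note which ones accept. The function name `find_open_ports` and the address in the usage note are assumptions made for this example.

```python
import socket


def find_open_ports(host, ports, timeout=0.5):
    """Return the ports in `ports` that accept a TCP connection on `host`.

    Hypothetical helper for illustration; a real scanner such as Nmap
    does far more (service detection, timing control, and so on).
    """
    open_ports = []
    for port in ports:
        with socket.socket(socket.AF_INET, socket.SOCK_STREAM) as sock:
            sock.settimeout(timeout)
            # connect_ex returns 0 on success instead of raising an exception.
            if sock.connect_ex((host, port)) == 0:
                open_ports.append(port)
    return open_ports
```

On a device you own, a call might look like `find_open_ports("192.168.1.50", range(8000, 8500))`, where the address and port range are placeholders rather than values taken from the report.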

Kunze found that adding a new user to the target device involves two steps: first, obtaining the device name, certificate, and “cloud ID” from its local API; second, using that information to send a link request to the Google server. To add an unauthorized user to a target Google Home device, Kunze implemented the linking process in a Python script that automated the extraction of local device data and reproduced the linking request.
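The two-step flow can be pictured with a short, offline Python sketch. This is not Kunze's script and it makes no network calls; every field name and the shape of the payload are hypothetical stand-ins, since the article does not give the real schema. It only shows how the three values read locally would feed into a link request.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class LocalDeviceInfo:
    """The three values the article says come from the device's local API."""
    name: str
    certificate: str
    cloud_id: str


def parse_local_info(raw: dict) -> LocalDeviceInfo:
    """Step 1: pull the required fields out of a local-API response.

    The key names are hypothetical stand-ins, not the real response schema.
    """
    missing = [key for key in ("name", "certificate", "cloud_id") if key not in raw]
    if missing:
        raise ValueError(f"local device info is missing: {', '.join(missing)}")
    return LocalDeviceInfo(raw["name"], raw["certificate"], raw["cloud_id"])


def build_link_request(info: LocalDeviceInfo, account: str) -> dict:
    """Step 2: assemble a link request for `account` (hypothetical payload shape)."""
    return {
        "account": account,
        "device": {
            "name": info.name,
            "certificate": info.certificate,
            "cloud_id": info.cloud_id,
        },
    }
```

The point of the sketch is the dependency between the steps: without all three locally obtained values, no link request can be built, which is why access to the local API mattered so much.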

The creepy realization could keep a vast number of Americans from installing a voice-activated home assistant for fear of being spied on by God knows who.